AD ≠ AI. That being said, it’s common to benefit from hand-rolled derivatives in tricky calculations.

I do know that AI and AD are not the same. ![]()

The reason I hand differentiate is that this should be more efficient: Then I can declare all coefficients as const and then no further changes are necessary, apart from precalculation of the subgrid using Horner’s method for polynomials (added thanks to @mkitti at an earlier stage), but now evaluated on the exact rationals of the kernels. I also have to use BigInt, otherwise I get integer overflow, and I’m currently just at the 11th degree kernel lower derivatives. But I hope this pays off as efficiency in the end. ![]()

I made this extra plot using Makie.jl, showing the frequency spectra of all the kernels in the package.

All of the :b type kernels are dark colored with a marker, while all :a kernels are lighter.

The ideal sinc filter is shown as well, as a performance comparison.

Please note that I have renamed :b3 --> :a4 in the upcoming release.

The reason for this renaming is that I did not develop this kernel and it is 4th order, not 7th.

After this renaming, all of the :b kernels are 7th order, and all the :a kernels are the previously published ones. ![]()

Hi,

I just wanted to announce that I just released version 0.3.0. of this package. It features substantial improvements and new features, as mentioned above. ![]()

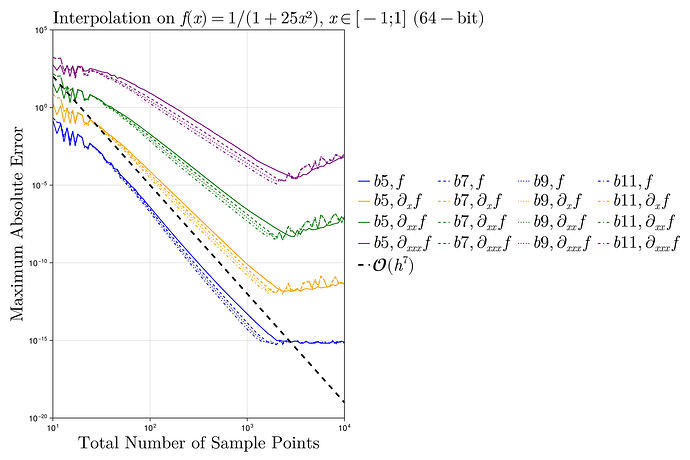

- ‘Predifferentiated’ kernels can deliver up to 6 numerical higher derivatives, without the accuracy loss associated with finite differences, the only drawback being slightly slower convergence. In higher dimensions these derivatives become mixed partial derivatives. Previously I claimed up to C11 continuity which turned out to be false, C6 is the maximum, and better keep it C5 (nicest). This is still remarkable compared to the other kernels from the literature which are C1 at most (to my surprise!) even though the papers claimed they were much better.

- As I also mentioned above I put those derivatives to work using Hermite ‘subgrid’ interpolation between the nearest interpolated points. This allows convergence to machine precision with only 100 precomputed kernel points (vs 10k with linear interpolation). This feature is available in 1D and 2D only, due to tensor product combinatorial explosion.

- I’ve also expanded the readme a lot to better explain everything in detail and added the frequency response.

- Something I think I haven’t mentioned is that the extrapolation composes with itself, meaning you can do nesting of different types of extrapolation, this is also described in the readme.

I hope these updates will be useful. ![]()

New version: v0.4.0 just released:

- It fixes an allocation bug which affected performance of higher dimensional interpolations.

- The package now features support for non-uniform grids in arbitrary dimensions, through the tensor product approach.

- The API remains unchanged: Non-uniform grids are detected internally.

Please refer to the updated readme for details. ![]()

New version: v0.5.0 just released:

- It expands the new non-uniform capabilities to include the higher-order

:bkernels. - This allows smooth interpolation and higher derivatives to be calculated from non-uniform discrete samples.

- Non-uniform

:bkernels precomputation scale in complexity with the number of intervals; For performance critical applications, use the non-uniform kernels once, to resample the data, then use uniform kernels.

In the figure below, only the raw samples are known, yet up to 5 derivatives are recovered accurately:

On uniform grids, kernel weights are the same for every interval. On non-uniform grids, each interval needs its own weights adapted to the local grid geometry. This is achieved by expanding the kernel in a binomial series (using Rational{BigInt} arithmetic to avoid floating-point contamination in the weight generation) around each interval’s local coordinate, then projecting through a Vandermonde system to enforce polynomial reproduction up to the kernel’s design order. The result is a compact set of polynomial coefficients per interval, evaluated via Horner’s method at query time. Ghost point values near domain boundaries are also computed via Vandermonde solves using Rational{BigInt} arithmetic.

New versions v0.6.0, v0.7.0, and v0.8.0 released, quite a lot has happened since v0.5.0!

v0.6.0, Pre-shipped kernel tables Constructor performance improved by up to 44x through pre-shipped kernel tables. The default setup path now requires zero disk I/O, kernel tables for all b-series kernels are shipped as package constants and loaded from memory at startup.

v0.7.0, Lazy evaluation and high-dimensional scaling New lazy=true keyword skips ghost point expansion at construction, computing boundary values on the fly only when needed. Construction becomes constant-time (~0.12 ms) regardless of grid size or dimension, up to 349x faster for :b5 on 4D 30^4 grids. Interior evaluation is identical to eager mode. This release also adds a-series derivatives (:a3 through :a7), nonuniform :a0 (neareast neighbor) and :a1 (linear).

v0.8.0, Antiderivative support Pass derivative=-1 to compute the indefinite integral of the interpolant, zero-anchored at the left domain boundary:

x = range(-1.0, 1.0, length=100)

y = 1 ./ (1 .+ 25 .* x.^2) # Runge's function

itp = convolution_interpolation(x, y; derivative=-1)

itp(1.0) # ≈ 1/5 * atan(1.0*5) + 1/5 * atan(5) # last term is the anchor value

Works in arbitrary dimensions as the mixed partial antiderivative, with zero-allocation O(stencil) evaluation. The package now has 754 tests. Please refer to the updated readme for details. ![]() The figure below shows convergence of the antiderivative of Runge’s function:

The figure below shows convergence of the antiderivative of Runge’s function:

ConvolutionInterpolations.jl v0.9.0, v0.10.0, v0.10.1 – major updates and bugfix

A lot has happened since v0.8.0! ![]() Here is a summary of what is new, most important first.

Here is a summary of what is new, most important first.

v0.10.1 – Major bugfix: all 2D/3D/ND operations now reach machine precision

A fundamental orientation error in the boundary condition ghost point computation has been corrected. When extracting 1D slices for right-boundary polynomial extrapolation in N>1 dimensions, the slice was ordered boundary->interior rather than interior->boundary. This caused the polynomial coefficients to extrapolate in the wrong direction, corrupting ghost points on right boundaries in 2D and higher dimensions.

The fix is a single line:

# Before

workspace.slice[j] = c[idx - workspace.slice_offset[j]]

# After

workspace.slice[j] = c[idx - workspace.slice_offset[n_dim - j + 1]]

All multidimensional operations – interpolation, differentiation up to 6th order, antiderivatives, and any mixed combination – now converge to machine precision in 2D and higher.

Convergence enhancement across dimensions

An unexpected but theoretically satisfying result: integration order compounds across dimensions. With a 7th order 1D kernel (:b11):

| Dimensions | Convergence order |

|---|---|

| 1D | 7th |

| 2D | ~9th |

| 3D | ~11th |

| 4D | ~13th |

A 10x10x10 grid (1000 points) already gives ~1e-4 accuracy for smooth 3D integrands. Machine precision is reached around n=100 per dimension in 3D, and n=40 per dimension in 4D.

This applies to mixed combinations of interpolation, differentiation, and integration.

v0.10.0 – Fast antiderivative and boundary condition improvements

O(eqs) fast antiderivative evaluation

The antiderivative fast path has been fundamentally redesigned. Previously the fast path still looped over the entire coefficient array – O(N) in 1D, O(N^2) in 2D. Now the kernel antiderivative tail (outside stencil support) is precomputed at construction time as prefix/suffix sums, reducing evaluation to O(eqs) in 1D and O(eqs^2) in 2D regardless of grid size.

Speedups at N=200 vs the direct path:

| Kernel | Speedup |

|---|---|

| :a3 | 115x |

| :b5 | 44x |

| :b7 | 1,513x |

| :b11 | 1,957x |

| :b13 | 2,117x |

At N=1000, speedups reach 11,000x for :b13.

:detect boundary condition removed

The :detect kernel boundary condition has been removed. It was unreliable in multiple dimensions – different slices of the same array could receive different boundary conditions, creating asymmetric coefficient arrays. Replace with :polynomial (default, recommended), :auto, :linear, or :periodic.

v0.9.0 – Per-dimension kernels and mixed operations

Per-dimension kernel degrees and derivatives

Both uniform and nonuniform grids now support per-dimension kernel and derivative tuples:

# Different kernel per dimension

itp = convolution_interpolation((x, y), vals; degree=(:b5, :b9))

# Integrate in x, differentiate in y

itp = convolution_interpolation((x, y), vals; degree=:b7, derivative=(-1, 1))

Pretty printing

Interpolation objects now display concise summaries:

FastConvolutionInterpolation(Float64, 2D, coefs=38x42, degree=(:b5, :b9), derivative=(1, 0))

All versions are available via the Julia package registry. The plots below show convergence of 2d and 3d integration (derivative=-1 in 2D/3D).

2D integration (9th order):

3D integration (11th order):

v0.11.0 is out, completing the API cleanup in preparation for v1.0.0.

This release is a breaking change, but migration is straightforward. The main theme is consistency: keyword arguments and struct fields now use clear, concise names, and extrapolation boundary conditions are symbols rather than structs inherited from Interpolations.jl.

# Before

itp = convolution_interpolation(x, y; degree=:b7, kernel_bc=:polynomial, extrapolation_bc=Line())

# After

itp = convolution_interpolation(x, y; kernel=:b7, bc=:polynomial, extrap=:line)

Full list of changes in the release notes. Please refer to the updated README for details. ![]()

Are you sure that the convergence order is actually compounded in higher dimensions? If you interpolate a 2d function that is constant in one direction but variable in the other, e.g. f(x,y) = 1/(1+ x^2), it seems like you would still recover the 1d convergence rate. The rate may appear higher for nice multidimensional functions but the underlying method is still a tensor product of 1d convolutions.

Hi lxvm,

Thank you for your kind response. ![]() You are right to question my claims!

You are right to question my claims!

I put together a script (with support from Claude (Anthropic)) to test more rigorously the claims you question. I posted the script below so you can verify yourself (be warned it is memory intense). As you could see in the plots posted above (for 2D and 3D), for very smooth and nicely behaved functions, the order does actually compound. But that is of course not enough for a general statement, you’re completely correct on that account! The new results from my Julia script on ND Runge’s function are:

For interpolation (roughly 7th order):

Interpolation convergence (kernel=:b7, derivative=0)

f(x,y,...) = 1/(1 + 25*(x^2+y^2+...))

N/dim 1D error 2D error 3D error

100 3.11e-09 6.22e-09 9.32e-09

200 7.27e-11 1.45e-10 2.15e-10

400 3.60e-13 7.21e-13 9.95e-13

order 6.5 6.5 6.6

For integration (roughly 5th order):

Antiderivative convergence (kernel=:b7, derivative=-1 per dim)

Reference: Gauss-Legendre quadrature (n=20 per dim)

N/dim 1D error 2D error 3D error 4D error 5D error

15 1.38e-04 2.79e-05 4.05e-06 4.57e-07 2.62e-08

25 1.11e-06 4.93e-07 1.19e-07 2.35e-08 4.24e-09

40 2.36e-07 4.26e-08 5.94e-09 7.57e-10 9.10e-11

order 4.6 4.7 4.7 4.6 4.1

So you were correct! The order does -not- compound on unfriendly functions like Runge’s. ![]() But notice that the absolute error drops quite dramatically with dimension, roughly 3 orders from 1D to 5D for the same number of points per dimension. Code:

But notice that the absolute error drops quite dramatically with dimension, roughly 3 orders from 1D to 5D for the same number of points per dimension. Code:

using ConvolutionInterpolations, FastGaussQuadrature, Printf

ns_interp = [100, 200, 400]

ns_integ = [15, 25, 40]

test_points_interp = range(0.001, 0.999, length=100)

function convergence_order(e1, e2, n1, n2)

log(e1 / e2) / log(n2 / n1)

end

runge(x...) = 1 / (1 + 25 * sum(xi^2 for xi in x))

# GL reference integral over [0,1]^D

function gl_integral(D, n_gl=20)

nodes, weights = gausslegendre(n_gl)

# map from [-1,1] to [0,1]

x = @. (nodes + 1) / 2

w = weights / 2

total = 0.0

for idx in Iterators.product(ntuple(_ -> 1:n_gl, D)...)

total += prod(w[idx[d]] for d in 1:D) * runge(ntuple(d -> x[idx[d]], D)...)

end

total

end

println("Interpolation convergence (kernel=:b7, derivative=0)")

println("f(x,y,...) = 1/(1 + 25*(x^2+y^2+...))")

@printf("%-6s %-14s %-14s %-14s\n", "N/dim", "1D error", "2D error", "3D error")

errors = Dict(d => Float64[] for d in 1:3);

for n in ns_interp

x = range(0.0, 1.0, length=n)

itp1 = convolution_interpolation(x, runge.(x); kernel=:b7)

e1 = maximum(abs(itp1(p) - runge(p)) for p in test_points_interp)

itp2 = convolution_interpolation((x, x), [runge(xi, yi) for xi in x, yi in x]; kernel=:b7)

e2 = maximum(abs(itp2(p, q) - runge(p, q)) for p in test_points_interp, q in test_points_interp)

itp3 = convolution_interpolation((x, x, x), [runge(xi, yi, zi) for xi in x, yi in x, zi in x]; kernel=:b7)

e3 = maximum(abs(itp3(p, q, r) - runge(p, q, r)) for p in test_points_interp, q in test_points_interp, r in test_points_interp)

push!(errors[1], e1); push!(errors[2], e2); push!(errors[3], e3)

@printf("%-6d %-14.2e %-14.2e %-14.2e\n", n, e1, e2, e3)

end

println("order " * join([@sprintf("%-14.1f ", convergence_order(errors[d][1], errors[d][end], ns_interp[1], ns_interp[end])) for d in 1:3]))

println()

# Integration: up to 5D, GL reference

println("Antiderivative convergence (kernel=:b7, derivative=-1 per dim)")

println("Reference: Gauss-Legendre quadrature (n=20 per dim)")

@printf("%-6s %-14s %-14s %-14s %-14s %-14s\n", "N/dim", "1D error", "2D error", "3D error", "4D error", "5D error")

errors = Dict(d => Float64[] for d in 1:5);

for n in ns_integ

x = range(0.0, 1.0, length=n)

errors_n = map(1:5) do D

knots = ntuple(_ -> x, D)

vals = [runge(ntuple(d -> knots[d][i[d]], D)...) for i in Iterators.product(ntuple(_ -> 1:n, D)...)]

itp = convolution_interpolation(knots, vals; kernel=:b7, derivative=-1)

ref = gl_integral(D)

# evaluate at upper corner (1,1,...,1) gives the full integral over [0,1]^D

abs(itp(ntuple(_ -> 1.0, D)...) - ref)

end

for (d, e) in enumerate(errors_n)

push!(errors[d], e)

end

@printf("%-6d %-14.2e %-14.2e %-14.2e %-14.2e %-14.2e\n", n, errors_n...)

end

println("order " * join([@sprintf("%-14.1f ", convergence_order(errors[d][1], errors[d][end], ns_interp[1], ns_interp[end])) for d in 1:5]))

println()

Hi again lxvm ![]()

I now have a more precise response!

First of all: There was an error in my script above! Notice I used ns_interp when calculating the integration rate, I should have used ns_integ!

Second: I found that the convergence order improves specifically for symmetric integrands. The improvement is dramatic: From 7th order to 12th order. ![]() You can see this by shifting the Runge function in the code above to be symmetric around (1/2)^N:

You can see this by shifting the Runge function in the code above to be symmetric around (1/2)^N:

runge(x...) = 1 / (1 + 25 * sum((xi - 0.5)^2 for xi in x))

Updated results:

Non-symmetric integrand convergence (roughly 7th order):

Antiderivative convergence (kernel=:b7, derivative=-1 per dim)

Reference: Gauss-Legendre quadrature (n=20 per dim)

N/dim 1D error 2D error 3D error 4D error 5D error

15 1.38e-04 2.79e-05 4.05e-06 4.57e-07 2.62e-08

25 1.11e-06 4.93e-07 1.19e-07 2.35e-08 4.24e-09

40 2.36e-07 4.26e-08 5.94e-09 7.57e-10 9.10e-11

order 6.5 6.6 6.7 6.5 5.8

Symmetric integrand convergence (roughly 12th order) (GL ref n=40, up from 20):

Antiderivative convergence (kernel=:b7, derivative=-1 per dim)

Reference: Gauss-Legendre quadrature (n=40 per dim)

N/dim 1D error 2D error 3D error 4D error 5D error

15 5.62e-05 3.80e-05 1.71e-05 6.39e-06 2.04e-06

25 1.46e-07 6.51e-08 1.58e-08 2.72e-10 3.07e-09

40 5.52e-10 3.71e-10 1.46e-10 3.20e-11 4.81e-12

order 11.8 11.8 11.9 12.4 13.2

This suggests that symmetry triggers cancellation of odd-order error terms in the tensor product structure, yielding convergence far beyond the nominal 7th order kernel.

Right, picking a symmetric test function often gives you a non-general (in this case improved) result due to special cancellations. Still, you show that the method has only 7th order convergence in the general case so I would recommend that you only claim this as the rate of convergence.

Regarding the lower absolute error of the integral in higher dimensions, it would probably be better to report the relative error since the value of the integral could be changing with dimensions. Even then, the absolute error of the integral is equal to the absolute value of the integral of the interpolation error and there can be cancellations if the interpolation error changes sign. You could compare the integral error to the L1 error if you wanted to find out how important that effect is, however what matters is that the rate of convergence is matching the theory.

Thanks! I will adjust the README to follow your advice, you’re right. ![]()

Hi again lxvm ![]()

Thanks again for your thoughtful response! In addition to my previous response, let me just clarify how the method actually works, since it differs from the standard “interpolate then integrate” pipeline.

The method

Both interpolation and antiderivative evaluation are convolution operations, just with different kernels. For interpolation on uniform points (see for example Keys (1981)):

where K is the interpolation kernel evaluated at integer distances from the desired point and the coefficients c_j are the user’s sampled data.

The kernels

For the antiderivative, the kernel K is analytically integrated to obtain \tilde{K}, with coefficients stored as exact rational numbers. While the interpolation kernel has compact support (zero outside a compact center), the antiderivative kernel has global support (non-zero everywhere but at the origin). Here is a visualization from my package of all the convolution kernels K(s) and their antiderivatives \tilde{K}(s) (notice s is not frequency but time/space/impulse/…):

The key property visible in the lower panel: \tilde{K}(s) saturates to exactly \pm\frac{1}{2}

outside the kernel support. This means \tilde{K} is global, and unlike K, its support is

unbounded. The global support of the antiderivative kernels necessitates a convolution with the full domain:

1D antiderivative \mathcal{O}(stencil) in kernel evaluations:

In 1D, the domain decomposes in 3 line segments. The anchor contribution can precomputed and looked up \mathcal{O}(1). The two end segments can be precomputed and looked up \mathcal{O}(1). Then central kernel piece must be computed \mathcal{O}(stencil), in total \mathcal{O}(stencil), same as interpolation and differentiation in 1D. These are the optimizations done in the package for 1D.

2D antiderivative \mathcal{O}(stencil^2) in kernel evaluations:

In 2D, the domain decomposes into 9 rectangular sections. The anchor contributions can be precomputed and looked up \mathcal{O}(1). The corners can be precomputed as cross sums and looked up \mathcal{O}(1). The center must use the full 2D convolution \mathcal{O}(stencil^2). The four remaining strips are a combination of \mathcal{O}(1) and \mathcal{O}(stencil). The total order of this operation becomes \mathcal{O}(stencil^2), the same as 2D convolution interpolation or differentiation. These are exactly the optimizations done in the package for 2D.

Higher-dimensional antiderivative \mathcal{O}(N^D):

In D dimensions each of length N this tensor product becomes \mathcal{O}(N^D) (the number of coefficients). The anchor contributions can be precomputed and looked up \mathcal{O}(1). The remaining values are calculated. Even though this is demanding, one can exploit that the kernels saturate at \pm 1/2 outside the support region and return early instead of evaluating the actual kernels. This means this sum is effectively \mathcal{O}(stencil^D) measured in kernel evaluations, same as multi-dimensional convolution interpolation/differentiation, but with \mathcal{O}(N^D) overhead from the loop over the full domain (the actual cost depends on kernel size vs domain size).

Further optimizations applied

The kernel evaluations in the support region are expensive, as they are sums of several high-order polynomials. To optimize this, the kernels are discretized in each interval, precomputed and tabulated. This reduces kernel evaluation, the most expensive part of the calculation, from evaluating several polynomials, to just a sorted lookup, two dot products with the data and a linear interpolation. This optimization not only improves speed but also accuracy, since tables are calculated at exact rational precision with zero floating point error. Apart from the interpolation in the kernel evaluation (which can be hermite cubic/quintic or linear), the only approximation is how well the convolution kernel K reproduces the function from its samples, which determines the convergence order (7th order for b-series kernels).

v0.12.0 is out! (after a small 24h package server hiccup, now available via ]update)

The :a0 kernel now supports derivative=-1. A delta-like spike integrates to a perfect Heaviside step:

n = 1001

x = range(0.0, 2π, length=n)

h = x[2] - x[1]

y = [abs(xi - π) ≈ 0.0 ? 1.0/h : 0.0 for xi in x]

itp = convolution_interpolation(x, y; kernel=:a0, derivative=-1)

itp(π + 0.1) # = 1.0

itp(π - 0.1) # = 0.0

This release also fixes a bug so that all kernels can now be combined freely.

Also breaking: bc=:polynomial → bc=:poly. Full release notes here. ![]()

v0.13.0 has been merged and will be available shortly!

The headline feature is a fast 3D antiderivative functor. As described in my earlier post on the 2D optimizations, the key insight is that the antiderivative kernel \tilde{K}(s) saturates to exactly +/- ½ outside the stencil support. In 2D this enables a 9-region decomposition (1 center + 4 edge strips + 4 corners) reducing evaluation to \mathcal{O}(stencil^2). The 3D case extends this naturally but with significantly more bookkeeping: a 27-region decomposition (1 center + 6 faces + 12 edges + 8 corners), where:

- Center: full \tilde{K} \times \tilde{K} \times \tilde{K} triple loop, \mathcal{O}(stencil^3)

- Faces: one saturated tail \times two \tilde{K} loops, \mathcal{O}(stencil^2) each

- Edges: two saturated tails \times one \tilde{K} loop, \mathcal{O}(stencil) each

- Corners: three saturated tails, \mathcal{O}(1) each

The result is \mathcal{O}(stencil^3) total, the same as 3D interpolation/differentiation, with ~1000 \times speedup over the slow path on a 100 \times 100 \times 100 grid.

Additionally, cubic and quintic subgrid interpolation are now enabled for all integral evaluations (previously forced to :linear), improving accuracy by ~2 orders of magnitude at minimal extra cost.

Breaking: :nearest subgrid has been removed. Use :linear instead.

Full release notes here.

v0.14.0 is out with new antiderivative features! ![]()

Key feature updates in this version: Previously, the tail sum optimizations for antiderivative kernels described in the posts above were only applied to pure integrals. The new version adds the capability to handle mixed integrals/derivatives/interpolations efficiently in N dimensions, as long as the number of integral dimensions does not exceed 3. For integral dimensions 4 or above, the package falls back to summing over all grid coefficients at evaluation time, which is less efficient, but still correct and 7th order accurate.

For high order mixed integrals it can help to do ‘nesting’ of the integrals, by evaluating some integral directions first, then building a new interpolator from those integrated values:

using ConvolutionInterpolations

# step 1: integrate in z and w

itp_zw = convolution_interpolation((xs,ys,zs,ws), vs; derivative=(0,0,-1,-1))

# evaluate onto a new 4D grid

int_zw = [itp_zw(x,y,z,w) for x in xs, y in ys, z in zs, w in ws];

# step 2: integrate in x and y

itp_nested = convolution_interpolation((xs,ys,zs,ws), int_zw; derivative=(-1,-1,0,0))

# single 4D call for comparison

itp_direct = convolution_interpolation((xs,ys,zs,ws), vs; derivative=(-1,-1,-1,-1))

This kind of nested combinations can be performed to reduce evaluation time in the dimensions one cares most about varying, and it does not degrade accuracy. In this way the necessary operations can be ‘condensed’ down to those essential for speed for a given application. But for integral dimensions \leq 3 it should not be necessary.

More testing has been added to the test suite:

- Validation of allocation free evaluation for all non-lazy kernels

- Bigfloat preservation tests

- Integral combinations with internal agreement between fast/direct paths.

Full release notes: here.

v0.15.0: convolution_resample, convolution_smooth, convolution_gaussian

Three new functions, type stability throughout, and a breaking rename.

Breaking changes

1. Default boundary condition: :auto renamed to :detect

The default BC symbol has been renamed to better reflect what it does, it detects the best boundary condition based on the data. Users who never set bc explicitly are unaffected. If you’ve been passing bc=:auto, just switch to bc=:detect.

2. Gaussian smoothing split out of convolution_interpolation

The B parameter is no longer accepted by convolution_interpolation. Gaussian smoothing now has dedicated functions with a cleaner interface and much better performance:

# Before

itp = convolution_interpolation(x, y; B=0.05)

# After, smoothed array on the same grid:

y_smooth = convolution_smooth(x, y, 0.05)

# After, interpolant for evaluation at arbitrary locations:

itp = convolution_gaussian(x, y, 0.05)

convolution_resample

High-order grid-to-grid resampling. Significantly faster than constructing a full interpolant and evaluating at each output point, with the advantage growing with dimension:

xs_coarse = range(0.0, 1.0, length=30)

ys_coarse = range(0.0, 1.0, length=30)

zs_coarse = range(0.0, 1.0, length=30)

data = [sin(x)*cos(y)*exp(-z) for x in xs_coarse, y in ys_coarse, z in zs_coarse]

xs_fine = range(0.0, 1.0, length=60)

ys_fine = range(0.0, 1.0, length=60)

zs_fine = range(0.0, 1.0, length=60)

# resample to the fine grid in one call

data_fine = convolution_resample((xs_coarse, ys_coarse, zs_coarse),

(xs_fine, ys_fine, zs_fine), data; kernel=:b9)

| Grid | convolution_resample |

construct + eval | Speedup |

|---|---|---|---|

| 1D 500 to 1000 | 22 us | 29 us | 1.3x |

| 2D 100^2 to 200^2 | 1.9 ms | 7.0 ms | 3.6x |

| 3D 30^3 to 60^3 | 8.9 ms | 127 ms | 14x |

Default kernel is :b9 (higher accuracy than convolution_interpolation’s default :b5). Supports per-dimension kernels and derivative resampling. 1D-10D type-stable default constructors. O(N) allocations independent of grid size.

convolution_smooth

Separable Gaussian smoothing. Takes gridded data and returns a smoothed array of the same size. This is the fast path when you just need clean data on your existing grid.

xs = range(0.0, 1.0, length=200)

ys = range(0.0, 1.0, length=200)

noisy_data = sin.(xs) .* cos.(ys') .+ 0.1 .* randn(200, 200)

# returns a 200x200 array, smoothed in place on the grid

clean = convolution_smooth((xs, ys), noisy_data, 0.02)

1000x speedup over the previous Gaussian path via exp weight hoisting:

| Grid | B=0.02 | B=0.05 | B=0.1 |

|---|---|---|---|

| 1D n=1000 | 22 us | 13 us | 9.6 us |

| 2D 200^2 | 1.7 ms | 0.99 ms | 0.72 ms |

| 3D 50^3 | 9.3 ms | 5.7 ms | 4.4 ms |

| 4D 40^4 | 273 ms | 206 ms | 169 ms |

Adaptive boundary fit based on stencil width, wider kernels use more boundary points automatically. B \leq 0.1 recommended.

convolution_gaussian

Standalone Gaussian interpolant. Unlike convolution_smooth which returns an array, this returns a callable functor that can be evaluated at arbitrary off-grid locations, just like convolution_interpolation.

x = range(0.0, 2pi, length=100)

y_noisy = sin.(x) .+ 0.1 .* randn(100)

# returns a functor, not an array

itp = convolution_gaussian(x, y_noisy, 0.05)

itp(0.3) # evaluate at any point in the domain

itp(1.57) # smoothed value at an off-grid location

Allocation-free evaluation via Val{B} and Val{NT} type encoding. Dedicated 1D, 2D, 3D and ND functors.

Scattered data pipeline

The new smoothing functions combine naturally with RBF packages for a complete scattered-to-structured workflow. Here’s a full example using ScatteredInterpolation.jl:

using ScatteredInterpolation, ConvolutionInterpolations, Plots

# true signal

xs = ys = range(0.0, 2pi, length=100)

true_signal = [sin(x)*cos(y) for x in xs, y in ys]

# scattered noisy measurements

n_points = 1000

points = rand(2, n_points) .* 2pi

values = sin.(points[1,:]) .* cos.(points[2,:]) .+ 0.1.*randn(n_points)

# Step 1: RBF onto uniform grid

itp_rbf = interpolate(NearestNeighbor(), points, values)

grid_rbf = [evaluate(itp_rbf, [x, y])[1] for x in xs, y in ys]

# Step 2: smooth

grid_smooth = convolution_smooth((xs, ys), grid_rbf, 0.02)

# Step 3: plot and verify

limits = extrema(grid_rbf)

p1 = scatter(points[1,:], points[2,:], zcolor=values,

title="Scattered noisy data (n=$n_points)",

ms=3, markerstrokewidth=0, aspect_ratio=1, clims=limits)

p2 = contourf(xs, ys, grid_rbf', title="After RBF gridding", clims=limits)

p3 = contourf(xs, ys, grid_smooth', title="After smoothing (B=0.02)", clims=limits)

p4 = contourf(xs, ys, true_signal', title="True signal", clims=limits)

plot(p1, p2, p3, p4, layout=(2,2), size=(900,800), colorbar=false)

# Step 4: High-order interpolant, evaluate, differentiate, integrate

itp = convolution_interpolation((xs, ys), grid_smooth) # f(x,y)

itp_dx = convolution_interpolation((xs, ys), grid_smooth; derivative=(1,0)) # df/dx

itp_int = convolution_interpolation((xs, ys), grid_smooth; derivative=-1) # int int f dx dy

From 1000 noisy scattered points to smooth contours nearly indistinguishable from the true signal.

Performance

This release achieved full type stability throughout the package:

Ref{Array{T,N}}ping-pong buffers eliminateCore.BoxboxingVal{UG}branch elimination removes allocating empty matrices in BC pathsBoundaryWorkspace{T,1}viaVal(1)for fully concrete workspace types- Type-stable 1D-10D default constructors for all four main functions, verified with

@code_warntype - Lazy evaluation functors (1D/2D/3D) fully reworked, 4D lazy construction: 1320ms to 79ms

- ND pure antiderivative made allocation-free via

nexprs/nloopsmacros

Tests and documentation

1178 tests passing (was 1086). Comprehensive new test suites for convolution_resample, convolution_smooth, and expanded lazy mode coverage. README updated with new sections on smoothing, resampling, and the scattered data pipeline, with all benchmarks based on updated numbers.