By the way, if this particular problem structure is typical of your needs: it is a QP (quadratic programming) problem, that is, a quadratic cost function with linear equality constraints. As such, it can be solved without a general optimization package, just by using a solver for linear equations (look the KKT matrix up if you are not familiar with it, for example here).

Here is the code:
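For readers who have not met the KKT matrix before, here is a short sketch of where the linear system comes from. It assumes the cost is written as 1/2 x'Qx + r'x + c and the constraint as Ax = b; the multiplier symbol λ and the Lagrangian name L are notation I am introducing for illustration.

```latex
\begin{aligned}
L(x,\lambda) &= \tfrac{1}{2}\,x^\top Q x + r^\top x + c + \lambda^\top (A x - b),\\
\nabla_x L &= Q x + r + A^\top \lambda = 0, \qquad A x = b,\\
\begin{bmatrix} Q & A^\top \\ A & 0 \end{bmatrix}
\begin{bmatrix} x \\ \lambda \end{bmatrix}
&=
\begin{bmatrix} -r \\ b \end{bmatrix}.
\end{aligned}
```

Stationarity of the Lagrangian together with the constraint gives one square linear system in x and λ, so a single linear solve returns both.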

# Parameterization of the quadratic cost function 1/2 x'Qx + r'x + c

Q = [0.0 1.0; 1.0 0.0]

r = [1.0, 2.0]

c = 2.0

# Parameterization of the linear constraint Ax = b

A = [4.0 6.0]

b = 130

# KKT linear system

K = [hcat(Q,A'); hcat(A, 0)]

d = vcat(-r,b)

y = K\d

The maximum is at

julia> xopt = y[1:2]

2-element Vector{Float64}:

16.0

11.0
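As a sanity check, one can confirm that the computed point satisfies the constraint and the stationarity condition, and evaluate the cost there. This is only a sketch: the data are repeated so that it runs on its own, and the names `λ`, `feas`, `stat` and `fopt` are my own, not part of the code above.

```julia
# Same data as above, repeated so that the snippet is self-contained
Q = [0.0 1.0; 1.0 0.0]
r = [1.0, 2.0]
c = 2.0
A = [4.0 6.0]
b = 130.0

# Build and solve the KKT system exactly as above
K = [hcat(Q, A'); hcat(A, 0)]
d = vcat(-r, b)
y = K \ d

xopt = y[1:2]   # primal solution
λ = y[3]        # Lagrange multiplier (illustrative name)

# Residuals: both should be zero up to rounding
feas = A * xopt .- b              # constraint residual Ax - b
stat = Q * xopt + r + A' * [λ]    # stationarity residual Qx + r + A'λ

# Cost at the optimum, 1/2 x'Qx + r'x + c
fopt = 0.5 * (xopt' * (Q * xopt)) + r' * xopt + c
```

With these numbers the multiplier comes out as -3 and the cost as 216, which agrees with (16+2)*(11+1).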

That this is truly a maximum can be verified by checking that the projected Hessian is negative definite, which in this case can be computed using

julia> using LinearAlgebra

julia> Z = nullspace(A);

julia> Z'*Q*Z

1×1 Matrix{Float64}:

-0.923076923076923

We are lucky here because the Hessian of the unconstrained cost function is actually indefinite (the matrix Q has one positive and one negative eigenvalue), so the unconstrained problem is unbounded. But for the constrained problem we have a unique, bounded maximum.
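Both claims are easy to check numerically. The sketch below computes the eigenvalues of Q and then restricts the cost to the constraint line by eliminating x₂; the helper name `g` and the step `h` are my own choices for illustration.

```julia
using LinearAlgebra

Q = [0.0 1.0; 1.0 0.0]

# Q is indefinite: one negative and one positive eigenvalue
λQ = eigvals(Q)

# Cost function and its restriction to the constraint 4x₁ + 6x₂ = 130
f(x₁, x₂) = (x₁ + 2) * (x₂ + 1)
g(x₁) = f(x₁, (130.0 - 4 * x₁) / 6)   # illustrative helper: cost along the line

# g is quadratic in x₁, so its second difference equals its (constant) curvature
h = 1.0
curvature = g(16.0 + h) - 2 * g(16.0) + g(16.0 - h)
```

Here `λQ` is `[-1.0, 1.0]` and `curvature` is -4/3, so the restricted cost is a concave parabola. The value differs from `Z'*Q*Z` only because x₁ is not a unit-length parameterization of the line; the sign is what matters.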

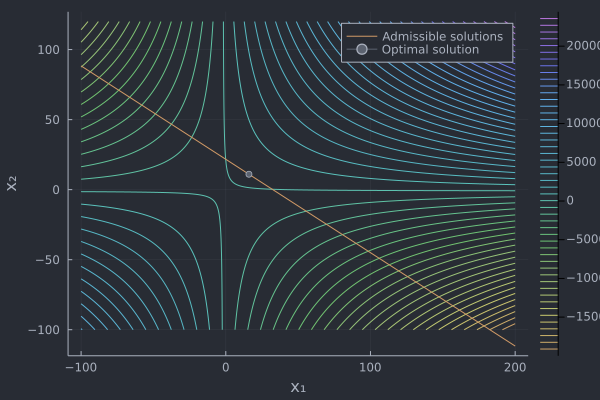

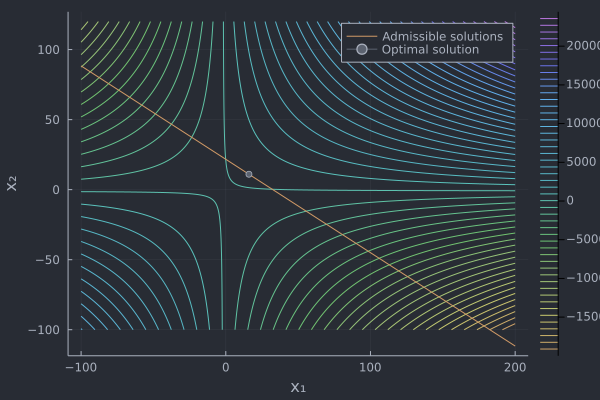

Finally, some plots

# Plotting the cost function, the constraint and the optimal solution

using Plots

f(x₁,x₂) = (x₁+2)*(x₂+1)

x₁ = range(-100.0,200.0,length=500)

x₂ = range(-100.0,120.0,length=500)

plot(x₁,x₂,f,st=:surface,xlabel="x₁",ylabel="x₂",zlabel="f(x₁,x₂)")

contour(x₁,x₂,f,xlabel="x₁",ylabel="x₂",levels=50)

x₂sol(x₁) = (130.0-4*x₁)/6

plot!(x₁,x₂sol,label="Admissible solutions")

plot!([y[1]],[y[2]],markershape=:circle,label="Optimal solution")